Those are Engadget’s words, not mine, describing this Microsoft Research project, which was shown at SIGGRAPH this week.

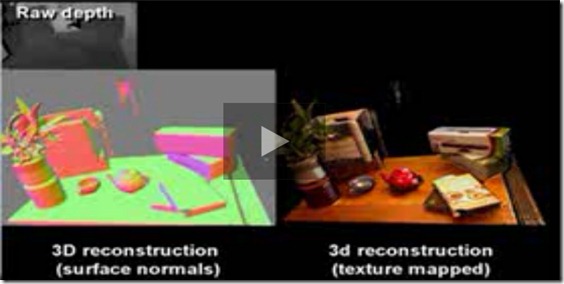

KinectFusion takes live depth data from a moving Kinect sensor and creates high-quality 3D models in real time. The possibilities are huge: you can scan a whole room and its contents within seconds, and as you move around and new objects are revealed, they are fused into a single 3D model. I immediately think of applications in architecture, gaming and augmented reality.

I’ll have more on this soon, but for now, check out the video and pick up your jaw at the end. The part where the sensor picks up the Dell monitor logo, geeky as it is, shows how accurate this is, and the objects simulated in real time at around 3:50 in the video are amazing. It’s not just me who thinks so… check the commentary on Twitter.