Real-time AI: Microsoft announces preview of Project Brainwave

Every day, thousands of gadgets and widgets whish down assembly lines run by the manufacturing solutions provider Jabil, on their way into the hands of customers.

Along the way, an automated optical inspection system scans them for any signs of defects, erring on the side of flagging every potential anomaly. It then sends the flagged parts to be checked manually.

The speed of operations leaves manual inspectors with just seconds to decide whether each product is really defective.

That’s where Microsoft’s Project Brainwave could come in. Project Brainwave is a hardware architecture designed to accelerate real-time AI calculations. It is deployed on a type of Intel computer chip called a field programmable gate array, or FPGA, and Microsoft says it performs real-time AI calculations at competitive cost and with the industry’s lowest latency, or lag time, based on internal performance measurements and comparisons to other organizations’ publicly posted information.

At Microsoft’s Build developers conference in Seattle this week, the company is announcing a preview of Project Brainwave integrated with Azure Machine Learning, which the company says will make Azure the most efficient cloud computing platform for AI.

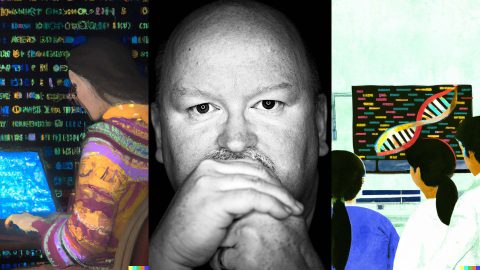

Mark Russinovich, chief technical officer for Microsoft’s Azure cloud computing platform, said the preview of Project Brainwave marks the start of Microsoft’s efforts to bring the power of FPGAs to customers for a variety of purposes.

“I think this is a first step in making the FPGAs more of a general-purpose platform for customers,” Russinovich said.

Jabil is working with Microsoft to see how it could use Project Brainwave to scan images quickly and accurately with AI and flag false positives, or items that aren’t actually defective. That more detailed analysis would free up the people who manually check for defects to focus on the more complex cases.

All those seconds saved add up, said Ryan Litvak, Jabil’s IT manager, as manufacturing customers are always eager to find any efficiency gains they can pass on to their customers.

“It’s highly competitive, so anything you can do to get an advantage for the customer is going to provide incremental improvement,” he said.

The Project Brainwave preview gives customers ultra-fast image recognition for applications such as the one Jabil is piloting, and it lets them run AI computations on each request in real time, instead of grouping requests into batches. It supports TensorFlow, one of the most commonly used frameworks for AI calculations with deep neural networks, a method roughly modeled on theories about how the brain works. Microsoft is also working to add support for Microsoft Cognitive Toolkit, another popular framework for deep learning.
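Why does avoiding batches matter for latency? A rough sketch helps: if a system waits to fill a batch before computing, the first request in the batch sits idle until the rest arrive. The snippet below is a simplified model, not a description of how Project Brainwave works internally. It assumes requests arrive at a fixed interval and that compute time per call stays constant regardless of batch size; the function names and all numbers are hypothetical.

```python
def worst_case_batch_wait_ms(batch_size: int, arrival_interval_ms: float) -> float:
    """Time the first request waits for a batch to fill.

    Hypothetical simplification: requests arrive every `arrival_interval_ms`.
    """
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    # The first request waits for the remaining (batch_size - 1) arrivals.
    return (batch_size - 1) * arrival_interval_ms


def total_latency_ms(batch_size: int, arrival_interval_ms: float, compute_ms: float) -> float:
    """Worst-case latency: waiting to fill the batch plus compute time.

    Assumes compute time per call is constant (illustrative only).
    """
    return worst_case_batch_wait_ms(batch_size, arrival_interval_ms) + compute_ms


if __name__ == "__main__":
    # Illustrative numbers: one image every 2 ms, 1 ms of compute per call.
    for b in (1, 8, 32):
        print(f"batch size {b:>2}: {total_latency_ms(b, 2.0, 1.0):.1f} ms")
```

Under these assumptions a batch size of 1 costs only the compute time, while larger batches add waiting time that grows linearly with batch size. Batching can still raise throughput on some hardware, which is the trade-off real-time serving gives up.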

Microsoft also is announcing a limited preview to bring Project Brainwave to the edge, meaning customers could take advantage of that computing speed in their own businesses and facilities, even if their systems aren’t connected to a network or the Internet.

“We’re making real-time AI available to customers both on the cloud and on the edge,” said Doug Burger, a distinguished engineer at Microsoft who leads the group that has pioneered the idea of using FPGAs for AI work.

Litvak said making Project Brainwave available on the edge, which would let Jabil put it directly in its manufacturing facilities, is key to making it practical and profitable to deploy across all of the company’s operations.

Jabil is one of the largest and most technologically advanced manufacturing solutions providers in the world. Its customers come to it at every stage of their business plans, from an idea on a napkin to a full-fledged product ready for assembly, but they all have one thing in common: the need to operate as efficiently and cost-effectively as possible. That is why saving even a few milliseconds and a little money on each calculation, by not sending it to the cloud, adds up.

Litvak said the ability to bring Project Brainwave to the edge could allow Jabil to scale beyond the pilot and potentially bring the capability to all its manufacturing operations.

Jabil also is looking at ways to use AI on Project Brainwave to better predict when manufacturing operations need maintenance, as a way to cut downtime.

From research to product

The public preview of Project Brainwave comes about five years after Burger, a former academic who works in Microsoft’s research labs, began talking about the idea of using FPGAs for more efficient computer processing. As he refined his idea, the current AI revolution kicked into full gear. That has created a massive need for systems that can process the large amounts of data required for AI systems to do things like scan documents and images for information, recognize speech and translate conversations.

Burger says Project Brainwave is perfect for the demands of AI computing. Project Brainwave’s hardware design can evolve rapidly and be remapped to the FPGA after each improvement, keeping pace with new discoveries and with the requirements of rapidly changing AI algorithms.

An FPGA also can be quickly reprogrammed to respond to new advances in AI, making it more flexible in such a fast-changing field than other types of computer chips.

The demand for systems that can handle AI workloads quickly and at reasonable cost is only expected to grow. That’s because companies are looking at more sophisticated uses of AI, such as to analyze unstructured data like videos, and they are developing more sophisticated AI algorithms that can do things like search those videos for all footage of cities near oceans.

“We’re going to need a lot more computing power,” Burger said.

From Bing and Azure to all businesses

FPGAs weren’t new when Burger and his team started exploring the idea that led to Project Brainwave, but until then no one had seriously considered them for large-scale computing. So the team set out to prove that they could be used in more practical scenarios.

Burger and his team found partners for their project, initially called Project Catapult, in the Bing search engine and Azure cloud teams.

Eric Chung, a senior researcher in Microsoft’s Silicon Systems Futures group and the technical lead on Project Brainwave, describes Bing as “constrained by low latency.” That’s a technical way of saying that when Bing customers type in a search query, they expect nearly instantaneous results.

That means Bing engineers are constantly looking for ways to improve the quality of their results without giving up even a millisecond of speed, at a time when the amount and variety of data that search engines sift through keep growing.

Using FPGAs, the team was able to quickly incorporate deep neural network-based search technology into Bing, vastly speeding up the system’s ability to serve up results.

“That was only possible because it was done in programmable hardware,” said Steve Reinhardt, a partner hardware engineering manager and a leader of Bing’s hardware acceleration efforts.

Ted Way, a senior program manager in Azure Machine Learning, said programmable hardware has another big advantage: it can easily be reprogrammed as new innovations come along, whereas other systems may require a hardware update, which could take months or years.

Azure also has been using FPGAs for the past several years to accelerate Azure’s cloud network.

Real-time AI for geospatial analysis

For many customers, the biggest potential benefit from Project Brainwave is the ability to do AI analysis on the fly, in real time.

On any given day, the geospatial analytics company Esri might be doing real-time traffic analysis, helping a nonprofit categorize land into segments such as buildings, canopies and water, or predicting the arrival times of thousands of vehicles.

All of those projects require the company to analyze a wide variety of disparate data, from sources such as satellite imagery, video feeds and sensors. While there are methods for incorporating these data feeds, the intensive computation involved can often lead to delays.

Omar Maher, Esri’s practice lead for advanced analytics, said that by using AI, the company has been able to do more in-depth and precise analysis more quickly than ever before. That’s allowing Esri users to focus on higher-value work such as providing sophisticated recommendations to their stakeholders.

“It’s saving our customers time and effort and money, and it’s enabling them to focus on the really important stuff,” Maher said.

As AI tools improve, Maher said the company is only seeing an increase in the need to process massive amounts of data in real time. For example, the company might want to extract information from thousands of video feeds to detect cars, bicycles, buses, pedestrians and other objects in order to understand traffic patterns and abnormalities. Or, it might want to do real-time analysis of satellite data to detect different objects, such as damaged homes.

That’s why Esri is talking to Microsoft about the possibility of using Project Brainwave for more efficient and cost-effective real-time AI analysis.

“We can definitely see the potential, especially with real-time AI,” Maher said.

Top image: Doug Burger holds an example of the hardware used for Project Brainwave. Photo by Scott Eklund/Red Box Pictures.
